Both Zoom and Twitter found themselves under fire this weekend for their respective issues with algorithmic bias. On Zoom, it’s an issue with the video conferencing service’s virtual backgrounds and on Twitter, it’s an issue with the site’s photo cropping tool.

It started when PhD student Colin Madland tweeted about a Black faculty member’s issues with Zoom. According to Madland, whenever said faculty member would use a virtual background, Zoom would remove his head.

“We have reached out directly to the user to investigate this issue,” a Zoom spokesperson told TechCrunch. “We’re committed to providing a platform that is inclusive for all.”

pic.twitter.com/1VlHDPGVtS

Colin Madland (@colinmadland)

September 19, 2020

When discussing that issue on Twitter, however, the problems with algorithmic bias compounded when Twitter’s mobile app defaulted to only showing the image of Madland, the white guy, in the preview.

“Our team did test for bias before shipping the model and did not find evidence of racial or gender bias in our testing,” a Twitter spokesperson said in a statement to TechCrunch. “But it’s clear from these examples that we’ve got more analysis to do. We’ll continue to share what we learn, what actions we take, and will open source our analysis so others can review and replicate.”

Twitter pointed to a tweet from its chief design officer, Dantley Davis, who ran some of his own experiments.

Davis posited Madland’s facial hair affected the result, so he removed the facial hair, and the Black faculty member appeared in the cropped preview. In a later tweet, Davis said he’s “as irritated about this as everyone else. However, I’m in a position to fix it and I will.”

Twitter also pointed to an independent analysis from Vinay Prabhu, chief scientist at Carnegie Mellon. In his experiment, he sought to see if “the cropping bias is real.”

(Results update)

White-to-Black ratio: 40:52 (92 images)

Code used:

Final annotation: https://t.co/OviLl80Eye

(I've created @cropping_bias to run the complete the experiment. Waiting for @Twitter to approve Dev credentials) pic.twitter.com/qN0APvUY5f

Vinay Prabhu (@vinayprabhu)

September 20, 2020
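To put the 40:52 figure in perspective, the arithmetic can be sketched in a few lines of Python. This is only an illustration: it assumes the ratio counts how many of the 92 previews favored the white face versus the Black face, and the helper `two_sided_binomial_p` is a hypothetical name introduced here, not part of Prabhu's code.

```python
from math import comb

# Counts taken from the tweet: 40 white, 52 Black, 92 images in total.
# Assumption: each count is the number of previews favoring that face.
white, black = 40, 52
total = white + black

white_share = white / total
black_share = black / total


def two_sided_binomial_p(k: int, n: int) -> float:
    """Exact two-sided p-value for k successes in n fair coin flips."""
    # Sum the probabilities of all outcomes at least as far from n/2 as k.
    distance = abs(k - n / 2)
    tail = sum(comb(n, i) for i in range(n + 1) if abs(i - n / 2) >= distance)
    return tail / 2 ** n


p_value = two_sided_binomial_p(white, total)
print(f"white: {white_share:.1%}, Black: {black_share:.1%}, p = {p_value:.3f}")
```

A split like this says little about any individual crop, and a sample of 92 images is small, so the numbers are best read as a rough check and not as a verdict on the model.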

In response to the experiment, Twitter CTO Parag Agrawal said whether or not cropping bias is real is “a very important question.” In short, sometimes Twitter does crop out Black people and sometimes it doesn’t. But the fact that Twitter does it at all, even once, is enough for it to be problematic.

This tweet and thread get to the crux of what happens in work places. Marginalized folks point out traumatizing outcomes, majority group folks dedicate themselves to proving bias does not show up in 50+1% of the occasions as if 49% or 30% or 20% of the time doesn’t cause trauma.

Karla Monterroso #BLM #ClosetheCamps (@karlitaliliana)

September 20, 2020

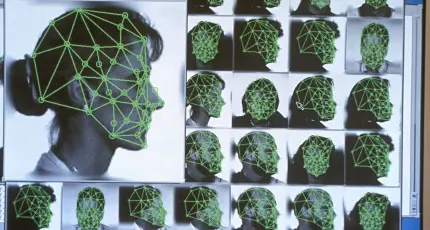

It also speaks to the bigger issue of the prevalence of bad algorithms. These same types of algorithms are what lead to biased arrests and imprisonment of Black people. They’re also the same kind of algorithms that Google used to label photos of Black people as gorillas and that Microsoft’s Tay bot used to become a white supremacist.

Algorithmic accountability
